Want to know how much website downtime costs, and the impact it can have on your business?

Find out everything you need to know in our new uptime monitoring whitepaper 2021

Most monitoring setups have the same weak spot.

Detection is easy. Decision-making is not.

StatusCake is good at telling you that something might be wrong. What happens next is where things usually get messy. One alert goes straight to a chat room. Another wakes the wrong person. A third turns out to be a false alarm because the site had a brief wobble and recovered before anyone looked.

Hermes is useful in that gap.

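Instead of treating StatusCake as the whole incident workflow, you can put Hermes behind the webhook and use it as a small verification and routing layer. The pattern is simple: StatusCake detects a problem and sends a webhook. Hermes checks whether the problem looks real, decides who should hear about it, sends the email, and records what happened.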

That gives you something better than “dump every alert into a channel” without dragging in a full incident-management platform.

StatusCake detects. Hermes verifies, routes, emails, and remembers.

That is the whole idea.

Why bother? Because most teams do not want every uptime alert treated the same way.

A down alert at 2:14pm during business hours is not the same as a down alert at 2:14am. A monitor flapping for ten seconds is not the same as a real outage. And when something goes wrong, you usually want a record of what arrived, what Hermes saw, and who got notified.

Hermes gives you a good place to add that logic.

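In this setup it does four jobs. It verifies the alert by probing the target itself, routes it according to the time of day, sends email notifications, and keeps a history of events for a daily summary.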

That is enough to make a cheap monitoring stack feel much more operationally sane.

This is intentionally small.

```text
StatusCake
  -> webhook request
  -> local receiver beside Hermes
  -> raw request log
  -> verification step
  -> escalation decision
  -> immediate email notification
  -> local event history
  -> daily summary email
```

There is no requirement to package this as a formal Hermes skill on day one. If your goal is to get a useful workflow running quickly, an ad hoc setup with a few scripts and a config file is enough.

That is the version I would recommend first.

You could try to build something around email parsing, but that is the wrong direction here.

Webhook delivery is cleaner. StatusCake sends the alert as a request to a route you control, and you can record that request exactly as it arrived.

In practice, that last point matters more than people expect. When an alert does not behave the way you thought it would, the first question is usually: did StatusCake send what I think it sent?

If you keep a raw inbound log, you can answer that immediately.

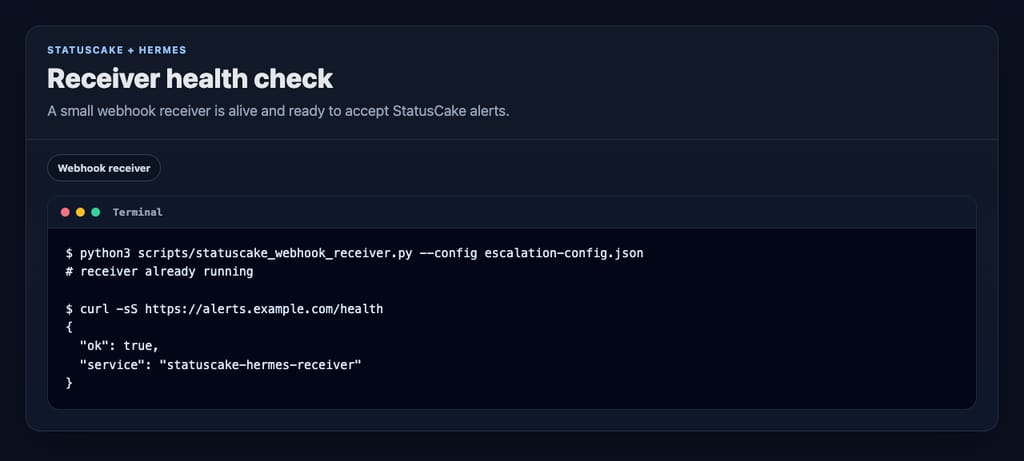

You do not need a huge service for this. A small local HTTP receiver is enough.

```bash
python3 scripts/statuscake_webhook_receiver.py \
  --config var/escalation-config.json \
  --host 127.0.0.1 \
  --port 8934
```

Expose that locally running receiver through whatever public entrypoint you trust for machine-to-machine requests. For demos, a Cloudflare quick tunnel is usually fine. What matters is that StatusCake can reach a route like this:

```text
https://example-public-host.test/webhooks/statuscake-alerts
```

Before you test from StatusCake, check both health endpoints:

```text
http://127.0.0.1:8934/health
https://example-public-host.test/health
```

One small gotcha: loading /webhooks/statuscake-alerts in a browser proves nothing. It is a webhook route, not a page. Use /health for browser checks, and send an actual webhook test when you want to exercise the alert path.
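To show how little such a receiver needs, here is a minimal standard-library sketch. It is not the project's statuscake_webhook_receiver.py: the handler class, the make_server helper, the 202/404/405 responses, and the JSON response bodies are assumptions made for illustration. It does show the split described above, with /health answering browser checks and the webhook route accepting only POST.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical sketch: route paths match the post, everything else is assumed.
WEBHOOK_PATH = "/webhooks/statuscake-alerts"


class ReceiverHandler(BaseHTTPRequestHandler):
    def _send_json(self, status: int, body: dict, extra_headers=()) -> None:
        data = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        for name, value in extra_headers:
            self.send_header(name, value)
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        if self.path == "/health":
            self._send_json(200, {"status": "ok"})
        elif self.path == WEBHOOK_PATH:
            # A browser visit ends up here: the webhook route only accepts POST.
            self._send_json(405, {"error": "use POST"}, [("Allow", "POST")])
        else:
            self._send_json(404, {"error": "not found"})

    def do_POST(self) -> None:
        if self.path != WEBHOOK_PATH:
            self._send_json(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        raw = self.rfile.read(length)
        # Logging, verification and routing would be called from here.
        self.server.received.append(raw)
        self._send_json(202, {"accepted": True, "bytes": len(raw)})

    def log_message(self, format, *args) -> None:
        pass  # silence the default per-request stderr logging


def make_server(host: str = "127.0.0.1", port: int = 8934) -> ThreadingHTTPServer:
    server = ThreadingHTTPServer((host, port), ReceiverHandler)
    server.received = []  # raw request bodies, kept in memory for inspection
    return server


if __name__ == "__main__":
    make_server().serve_forever()
```

Binding to 127.0.0.1 keeps the receiver private; only the tunnel or reverse proxy in front of it is reachable from StatusCake.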

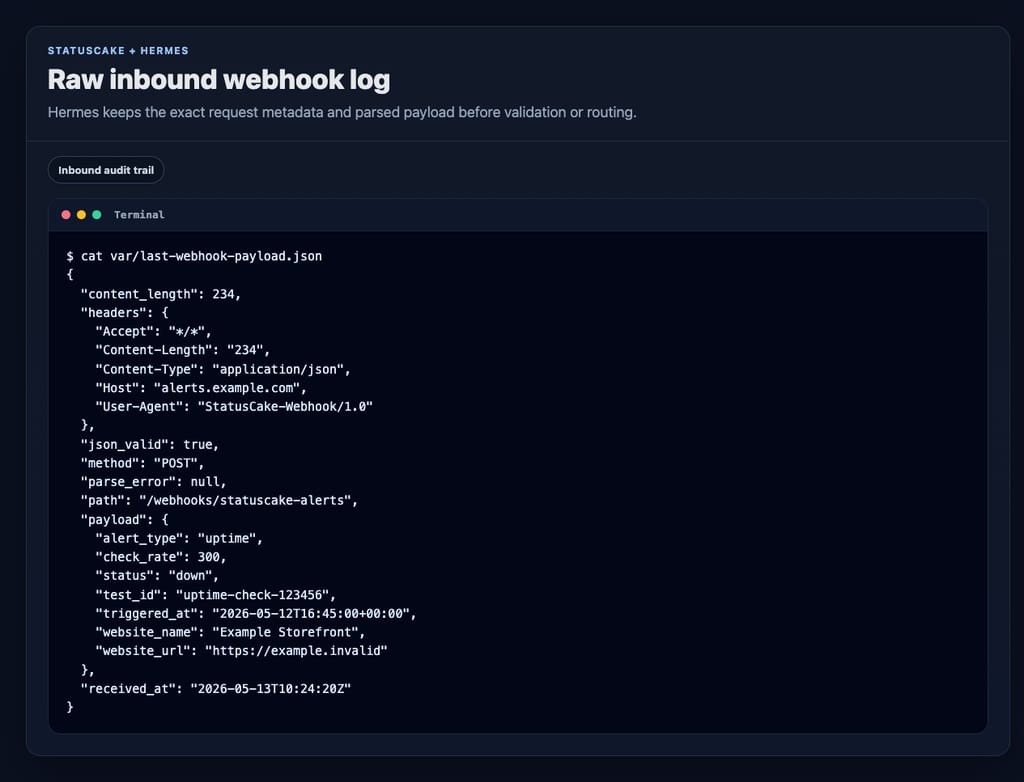

One of the best decisions in this design is to write the inbound request to disk before doing anything clever with it.

That gives you two useful artifacts:

- var/last-webhook-payload.json for quick inspection
- var/incoming-webhooks.jsonl as an append-only inbound ledger

That means you can answer the boring but important questions later: did the webhook arrive at all, and what exactly did StatusCake send?

Without that log, webhook debugging turns into folklore.
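Here is a sketch of that write-before-anything-else step, assuming the receiver passes in the raw body and headers. The two file names come from the post. The function name log_raw_request and the record layout (received_at, content_type, body) are hypothetical.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_raw_request(raw: bytes, headers, var_dir: Path) -> dict:
    """Persist an inbound webhook before any parsing or verification (hypothetical helper)."""
    var_dir.mkdir(parents=True, exist_ok=True)
    record = {
        "received_at": datetime.now(timezone.utc).isoformat(),
        "content_type": headers.get("Content-Type", ""),
        # Stored verbatim as text, even if it turns out not to be valid JSON.
        "body": raw.decode("utf-8", errors="replace"),
    }
    # Overwritten on every request: always the most recent payload.
    (var_dir / "last-webhook-payload.json").write_text(
        json.dumps(record, indent=2), encoding="utf-8"
    )
    # Append-only ledger: one JSON object per line, never rewritten.
    with open(var_dir / "incoming-webhooks.jsonl", "a", encoding="utf-8") as ledger:
        ledger.write(json.dumps(record) + "\n")
    return record
```

Storing the body as text rather than parsed JSON is deliberate: if a malformed request arrives, it still lands in the ledger for you to inspect.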

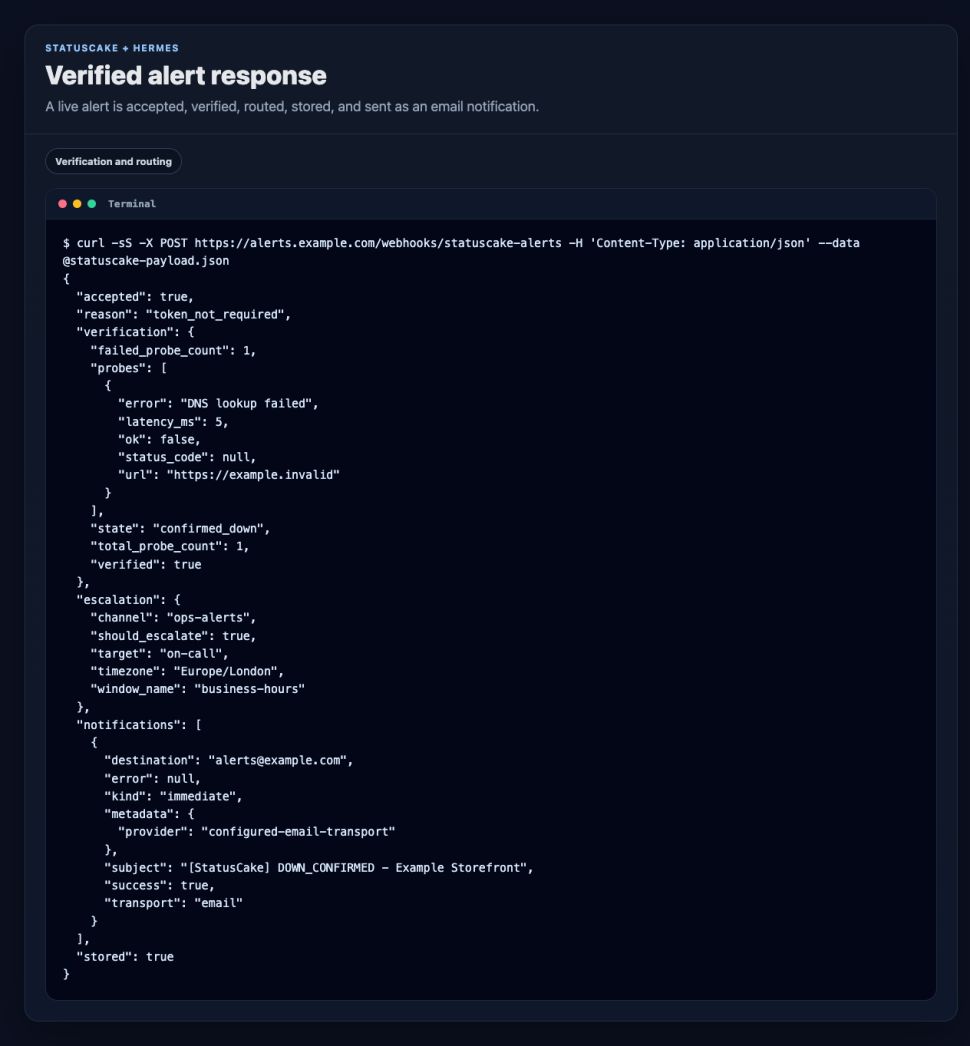

This is the part that makes Hermes more than a relay.

When a down alert comes in, Hermes does not need to panic immediately. It can probe the target itself and decide whether the outage looks real.

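A very simple version is enough: request the target URL with a short timeout, and treat the outage as confirmed only when at least a minimum number of those probes fail.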
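One way to express that check in Python, assuming the alert carries a status of down or up as in the sample payload later in this post. The function names and the DOWN_UNCONFIRMED / UP_UNCONFIRMED labels are hypothetical. DOWN_CONFIRMED and UP_CONFIRMED are the event names the config uses. The probe function is passed in so the decision logic can be tested without network access.

```python
import urllib.request
from typing import Callable, Iterable


def probe(url: str, timeout: float) -> bool:
    """Return True if the URL answers with a non-error HTTP status in time."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status < 400
    except (OSError, ValueError):
        # OSError covers URLError, HTTPError (4xx/5xx) and timeouts;
        # ValueError covers malformed URLs.
        return False


def classify(status: str, urls: Iterable[str], min_failed: int,
             check: Callable[[str], bool]) -> str:
    """Combine the StatusCake status with our own probes (hypothetical labels)."""
    failures = sum(1 for url in urls if not check(url))
    if status.lower() == "down":
        # Confirm a down alert only if enough of our own probes also failed.
        return "DOWN_CONFIRMED" if failures >= min_failed else "DOWN_UNCONFIRMED"
    # Confirm a recovery only if every probe succeeded.
    return "UP_CONFIRMED" if failures == 0 else "UP_UNCONFIRMED"
```

In the receiver you might call it as classify("down", ["https://example.com"], 1, lambda u: probe(u, timeout=8)). With min_failed_probes set to 1 and a single URL, any failed probe confirms the outage.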

The config supports that directly:

```json
{
  "timezone": "Europe/London",
  "probe_timeout_seconds": 8,
  "min_failed_probes": 1,
  "probe_urls": [],
  "notifications": {
    "immediate": [
      {
        "type": "email",
        "transport": "sendmail",
        "from": "statuscake-hermes@localhost",
        "to": ["alerts@example.com"],
        "events": ["DOWN_CONFIRMED", "UP_CONFIRMED"],
        "subject_prefix": "[StatusCake]"
      }
    ]
  }
}
```

A detail worth calling out: leaving probe_urls empty is not a bug. In this setup, that tells Hermes to verify against the website URL coming from the StatusCake payload. That is a good default when you want the monitor to carry its own target.

If you do have a better health endpoint than the public homepage, use it.
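Loading that config and applying the empty-list fallback could look like the sketch below. The function names and the DEFAULTS mapping are assumptions for illustration, since the post does not say what happens when a key is missing. website_url is the field name from the sample payload later in the post.

```python
import json
from pathlib import Path
from typing import List

# Hypothetical fallbacks, used only when a key is missing from the file.
DEFAULTS = {
    "timezone": "Europe/London",
    "probe_timeout_seconds": 8,
    "min_failed_probes": 1,
    "probe_urls": [],
}


def load_config(path: Path) -> dict:
    """Read the JSON config and fill in any missing top-level keys."""
    with open(path, encoding="utf-8") as f:
        return {**DEFAULTS, **json.load(f)}


def resolve_probe_urls(config: dict, payload: dict) -> List[str]:
    """Explicit probe_urls win; an empty list means 'probe the alert's own URL'."""
    if config.get("probe_urls"):
        return list(config["probe_urls"])
    url = payload.get("website_url")
    return [url] if url else []
```

The merge is shallow: a notifications block in the file replaces the default wholesale, which keeps the behaviour easy to predict.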

A lot of teams want basic routing rules but do not want a full paging product yet.

That is fine. Hermes can do the simple version well.

```json
{
  "escalation_schedule": {
    "windows": [
      {
        "name": "business-hours",
        "start_hour": 8,
        "end_hour": 18,
        "target": "primary-on-call",
        "channel": "discord ops business hours"
      },
      {
        "name": "out-of-hours",
        "start_hour": 18,
        "end_hour": 24,
        "target": "after-hours-escalation",
        "channel": "discord ops after hours"
      },
      {
        "name": "night-shift",
        "start_hour": 0,
        "end_hour": 8,
        "target": "night-duty-engineer",
        "channel": "discord ops night"
      }
    ]
  }
}
```

That is enough to stop every alert from behaving like a fire alarm.
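Picking a window from that schedule takes a few lines, assuming start_hour is inclusive, end_hour is exclusive, and hours are read in the config's timezone (Europe/London above). The function name pick_window is hypothetical. Converting to local time first is what keeps the 2:14pm versus 2:14am distinction correct across daylight-saving changes.

```python
from datetime import datetime
from zoneinfo import ZoneInfo


def pick_window(schedule: dict, when: datetime, tz_name: str) -> dict:
    """Return the first window whose [start_hour, end_hour) contains the local hour.

    `when` should be timezone-aware; a naive datetime is treated as system local time.
    """
    local_hour = when.astimezone(ZoneInfo(tz_name)).hour
    for window in schedule["windows"]:
        if window["start_hour"] <= local_hour < window["end_hour"]:
            return window
    raise LookupError(f"no escalation window covers hour {local_hour}")
```

Raising when no window matches is a deliberate choice: a gap in the schedule is a config bug, and failing loudly is better than silently dropping an alert.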

You do not need to overcomplicate delivery either.

For this build, immediate alert delivery is handled through sendmail, which keeps the integration dead simple on a machine that already knows how to send mail.

A DOWN_CONFIRMED or UP_CONFIRMED event triggers an email right away. In this example, messages go straight to the configured alert recipient.
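A sketch of that email step using Python's email package and a sendmail binary. It assumes sendmail lives at /usr/sbin/sendmail and that the event is a dict with type, website_name, website_url and target keys. Those keys and both function names are hypothetical. The rule dict mirrors the "immediate" entry in the config shown earlier, including its events filter.

```python
import subprocess
from email.message import EmailMessage
from typing import Optional


def build_alert_email(rule: dict, event: dict) -> Optional[EmailMessage]:
    """Build a message for one notification rule, or None if the rule ignores this event."""
    if event["type"] not in rule.get("events", []):
        return None
    msg = EmailMessage()
    msg["From"] = rule["from"]
    msg["To"] = ", ".join(rule["to"])
    prefix = rule.get("subject_prefix", "")
    msg["Subject"] = f'{prefix} {event["type"]}: {event["website_name"]}'.strip()
    msg.set_content(
        f'{event["website_name"]} ({event["website_url"]}): {event["type"]}\n'
        f'Routed to: {event.get("target", "unrouted")}\n'
    )
    return msg


def send_with_sendmail(msg: EmailMessage, sendmail: str = "/usr/sbin/sendmail") -> None:
    # -t: read recipients from the message headers; -i: a lone "." line does not end input.
    subprocess.run([sendmail, "-t", "-i"], input=msg.as_bytes(), check=True)
```

Keeping message construction separate from delivery means you can inspect or test the exact email without a working mail setup.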

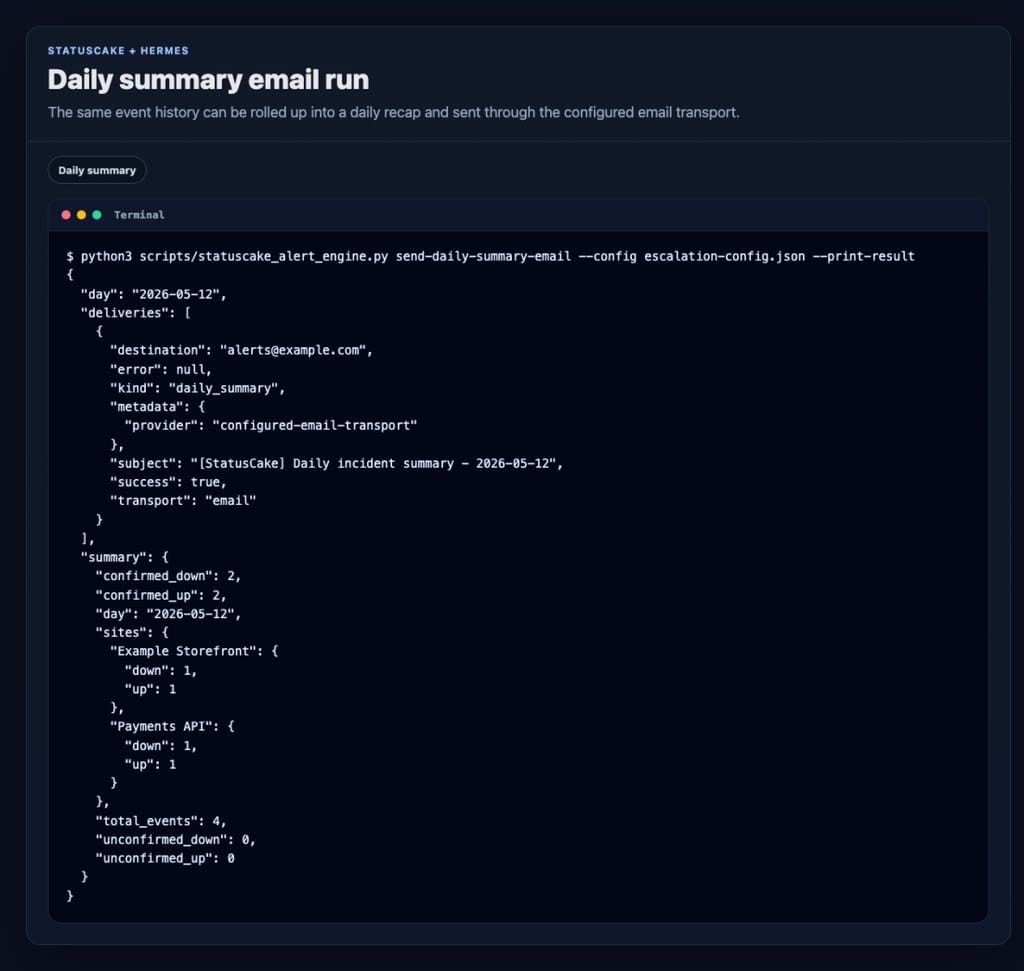

The CLI can also send a daily summary on demand:

```bash
python3 scripts/statuscake_alert_engine.py send-daily-summary-email \
  --config var/escalation-config.json \
  --print-result
```

And if you want the recap every morning, schedule the wrapper script:

```bash
python3 scripts/statuscake_daily_summary_email.py
```

In this project, that job is scheduled for 09:00 Europe/London each day.
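The post does not show how the summary is built. One plausible approach is to count events per site for a single local calendar day, assuming each history line carries recorded_at (an ISO 8601 timestamp with offset), website_name and type. The field names and the summarize_day function are hypothetical.

```python
import json
from collections import Counter
from datetime import date, datetime
from pathlib import Path
from zoneinfo import ZoneInfo


def summarize_day(history: Path, day: date, tz_name: str = "Europe/London") -> str:
    """Count verified events per site and type for one local calendar day."""
    tz = ZoneInfo(tz_name)
    counts: Counter = Counter()
    with open(history, encoding="utf-8") as f:
        for line in f:
            if not line.strip():
                continue  # tolerate blank lines in the history file
            event = json.loads(line)
            local = datetime.fromisoformat(event["recorded_at"]).astimezone(tz)
            if local.date() == day:
                site = event.get("website_name") or "unknown"
                counts[(site, event["type"])] += 1
    if not counts:
        return f"No verified events on {day.isoformat()}."
    lines = [f"Verified events on {day.isoformat()}:"]
    for (site, kind), n in sorted(counts.items()):
        lines.append(f"- {site}: {kind} x{n}")
    return "\n".join(lines)
```

Grouping by local date rather than UTC date matches a summary that goes out at 09:00 London time.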

Every verified event gets appended to var/alerts.jsonl.

That sounds plain because it is plain. That is also why it is useful.

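A JSONL file gives you an append-only record that is easy to read, easy to grep, easy to parse one event per line, and enough to build the daily summary from.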

You do not need a dashboard before you have a history.

Start with the history.
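Here is a sketch of the append step, with fields covering the questions from earlier: what arrived, what Hermes concluded, and who got notified. The function name and field names are hypothetical. The website_name, website_url and test_id keys mirror the sample payload further down.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def record_event(history: Path, event_type: str, payload: dict,
                 window: str, target: str, notified: list) -> dict:
    """Append one verified event to the history file (hypothetical record layout)."""
    record = {
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "type": event_type,                         # what Hermes concluded
        "website_name": payload.get("website_name"),
        "website_url": payload.get("website_url"),
        "test_id": payload.get("test_id"),          # ties back to what arrived
        "window": window,
        "target": target,                           # who it was routed to
        "notified": notified,                       # who actually got an email
    }
    history.parent.mkdir(parents=True, exist_ok=True)
    with open(history, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record
```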

Once the receiver is up, you can test the full path locally with a small sample payload that looks like a StatusCake alert.

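Local webhook route:

```text
http://127.0.0.1:8934/webhooks/statuscake-alerts
```

Sample payload fields:

```text
website_name: Example Storefront
website_url: https://example.com
status: down
check_rate: 300
test_id: 123456
alert_type: uptime
```

The response tells you a lot in one go, because the request exercises the full path rather than just the HTTP route.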

That is the kind of tight feedback loop you want when you are building monitoring workflows.
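To send that sample to the local route from a script, a standard-library sketch like this works. It assumes the receiver accepts a JSON body. The post does not say how the sample is encoded, so if your receiver expects form fields, swap json.dumps for urllib.parse.urlencode and change the Content-Type. Sending check_rate and test_id as numbers is also an assumption, and build_test_request is a hypothetical name.

```python
import json
import urllib.request

# Values from the sample above; sending them as JSON is an assumption.
SAMPLE_PAYLOAD = {
    "website_name": "Example Storefront",
    "website_url": "https://example.com",
    "status": "down",
    "check_rate": 300,
    "test_id": 123456,
    "alert_type": "uptime",
}
LOCAL_ROUTE = "http://127.0.0.1:8934/webhooks/statuscake-alerts"


def build_test_request(url: str, payload: dict) -> urllib.request.Request:
    """Build a POST carrying the payload as a JSON body."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url, data=body, method="POST",
        headers={"Content-Type": "application/json"},
    )


if __name__ == "__main__":
    request = build_test_request(LOCAL_ROUTE, SAMPLE_PAYLOAD)
    # Generous timeout: the receiver may run its own probes before replying.
    with urllib.request.urlopen(request, timeout=30) as response:
        print(response.status)
        print(response.read().decode("utf-8"))
```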

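This pattern is a good fit if you want verified alerts, simple time-based routing, immediate email for confirmed events, and a plain event history, without adopting a full incident-management platform.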

Should this eventually become a formal Hermes skill? Probably, yes.

It packages nicely because the idea is easy to explain and easy to demo: StatusCake sends the signal, Hermes verifies it, routes it, sends the email, and keeps the history.

But I would not lead with the packaging story.

The stronger story is that you can get real value from a small ad hoc setup with a few scripts, a JSON config, and a local receiver. That is easier for people to copy, easier for people to trust, and easier for them to adapt.

Once that pattern proves itself, turning it into a formal skill is straightforward.

The useful part of this setup is not that Hermes can receive a webhook. Lots of tools can receive a webhook.

The useful part is that Hermes adds judgment after detection.

StatusCake tells you that something might be broken. Hermes can check whether it really looks broken, decide who should hear about it, send the email, and keep the record for tomorrow morning’s summary.

That is a much better workflow than forwarding every alert and hoping the humans sort it out. And it could end up being a lot cheaper than running a full triage/escalation stack via cloud services.
