
The allure of OpenClaw is undeniable. You deploy a highly autonomous, self-hosted AI agent, give it access to your repositories and inboxes, and watch it reason through complex workflows while you sleep. It is the dream of the ultimate 10x developer tool realized.

But as any veteran DevOps engineer will tell you: running an LLM-backed Node.js agent in production is vastly different from testing it on your local machine.

When traditional web applications break, they usually have the courtesy to throw a hard 500 error or crash entirely. Autonomous agents, however, are notorious for failing silently. They burn through token limits, get trapped in infinite hallucination loops, cannibalize server RAM during heavy context window crunches, or simply abandon cron jobs without logging a single fatal error.

At StatusCake, we’ve watched the community transition from experimenting with OpenClaw to relying on it for mission-critical infrastructure. To make that leap safely, “deploy and forget” is not an option. You need a production-grade observability stack, and more importantly, you need automated triage.

Here is how you build it.

Monitoring an OpenClaw instance requires you to track both the infrastructure health (is the Node process alive?) and the cognitive health (is the agent actually executing tasks?).
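To make the distinction concrete, here is a minimal sketch of how the two signals could be combined into one verdict. The function name, the snapshot fields, and the silence threshold are all hypothetical; OpenClaw does not expose them in this form, so treat this as an illustration of the idea, not as an API.

```javascript
// Hypothetical classifier: combines infrastructure health (is the process alive?)
// with cognitive health (has the agent completed a task recently?).
function classifyAgentHealth(snapshot, nowMs, maxSilenceMs) {
  // Infrastructure failure: the process itself is gone.
  if (!snapshot.processAlive) {
    return "down";
  }
  // Cognitive failure: the process is up, but no task has finished
  // within the allowed window, so the agent may be looping or deadlocked.
  const silenceMs = nowMs - snapshot.lastTaskCompletedAtMs;
  if (silenceMs > maxSilenceMs) {
    return "stalled";
  }
  return "healthy";
}
```

A plain ping can only tell "down" apart from everything else. The "stalled" state is the one that needs the deeper checks.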

If you are only pinging the unauthenticated /health endpoint, you are flying blind. You might know the server is powered on, but you have no idea if the internal SQLite memory database is corrupted or if the agent is deadlocked on an external API rate limit.

To gain true visibility into OpenClaw’s operations, you need a multi-layered approach.

We recommend deploying five specific check types to cover the entire surface area of an OpenClaw deployment:

- Query the authenticated /api/status endpoint, passing your gateway.auth.token via a Bearer header. This validates that internal routing is functional and the memory database is actively readable.
- If you expose port 18789 via a reverse proxy to receive external webhooks, an expired SSL certificate or hijacked DNS record is a critical vulnerability.
- Append a curl payload to your scheduled SKILL.md scripts. If StatusCake doesn’t receive the expected push ping, you know the agent dropped the ball.

Deep Dive: Want the exact configuration settings and technical rationale for this setup? Read our comprehensive Knowledge Base guide: Configuring StatusCake Monitoring for OpenClaw Deployments.
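For a quick local test of the authenticated status check and the heartbeat idea, a rough Node.js sketch might look like the following. It assumes Node 18 or later (for the built-in `fetch` and `AbortSignal.timeout`). The base URL, the timeout, the function names, and the `pushUrl` argument are placeholders of ours, and the heartbeat wrapper is a JavaScript stand-in for the curl approach described above, not the configuration from the Knowledge Base guide.

```javascript
// Sketch only: URLs, timeouts, and function names are illustrative placeholders.

// Build the authenticated request for the /api/status endpoint.
function buildStatusRequest(baseUrl, token) {
  if (!token) {
    throw new Error("a gateway.auth.token value is required");
  }
  return {
    url: new URL("/api/status", baseUrl).toString(),
    options: { method: "GET", headers: { Authorization: `Bearer ${token}` } },
  };
}

// Probe the endpoint; any non-2xx answer, network error, or timeout counts as unhealthy.
async function probeStatus(baseUrl, token, timeoutMs = 5000) {
  const { url, options } = buildStatusRequest(baseUrl, token);
  try {
    const res = await fetch(url, { ...options, signal: AbortSignal.timeout(timeoutMs) });
    return { healthy: res.ok, status: res.status };
  } catch (err) {
    return { healthy: false, status: null, error: String(err) };
  }
}

// Run a scheduled task and send the heartbeat only if the task finished without throwing.
// pushUrl is whatever push URL your monitoring check gives you.
async function runWithHeartbeat(task, pushUrl) {
  await task(); // a thrown error skips the ping, so the missed heartbeat raises the alert
  await fetch(pushUrl);
}
```

Calling `probeStatus("http://127.0.0.1:18789", process.env.OPENCLAW_TOKEN)` would exercise the first check locally; the environment variable name is again just an example.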

Monitoring is only half the battle. If your OpenClaw instance exhausts its server resources at 3:00 AM, an email alert isn’t a solution—it’s an interruption. True autonomy means the system should attempt to heal itself before paging a human operator.

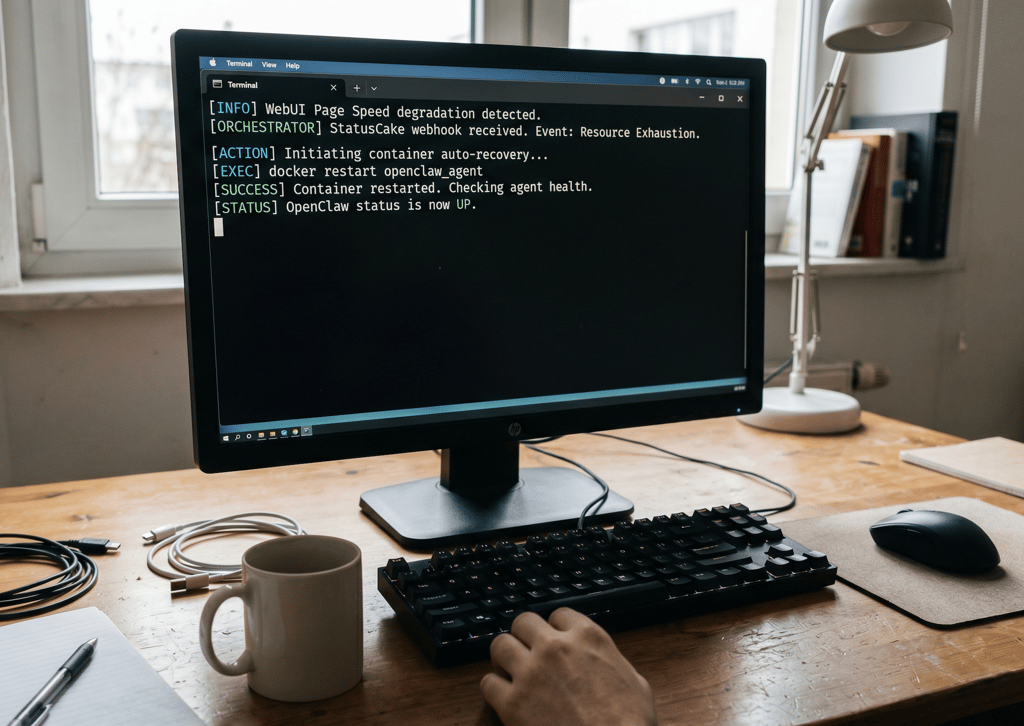

By leveraging StatusCake Webhooks, you can bridge the gap between knowing there is a problem and executing the solution.

Because OpenClaw cannot respond to an alert when its own gateway has crashed, the recovery logic has to live outside the agent: deploy an independent “Orchestrator” (such as a lightweight Express server or an n8n instance) alongside it.

When StatusCake detects degradation, it fires a POST payload to your orchestrator, which then maps the specific alert to a local CLI triage command. For example:

- `docker restart openclaw` to clear memory bloat.
- `openclaw doctor --fix` to repair broken session locks and restore routing.

Deep Dive: Ready to build your own auto-recovery orchestrator? We’ve provided the architecture mapping and a complete Node.js listener script in our advanced guide: How to Build a Self-Healing OpenClaw Agent using StatusCake Webhooks.
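As a rough illustration of the alert-to-command mapping (and not the listener script from the advanced guide), an orchestrator can be sketched with Node's built-in `http` module instead of Express. Several things here are assumptions: the check names in the map are invented, the webhook body is assumed to be form-encoded with `Name` and `Status` fields (verify against the payload your account actually sends), and the shared `secret` query parameter is a simple guard we added for the example.

```javascript
const http = require("node:http");
const { execFile } = require("node:child_process");

// Hypothetical mapping from check name to a local triage command.
const TRIAGE = new Map([
  ["openclaw-memory", ["docker", ["restart", "openclaw"]]],
  ["openclaw-status-api", ["openclaw", ["doctor", "--fix"]]],
]);

// Parse the (assumed) form-encoded webhook body into the two fields we need.
function parseAlert(body) {
  const form = new URLSearchParams(body);
  return { name: form.get("Name"), status: form.get("Status") };
}

// Only "Down" alerts for known checks trigger a command; everything else is ignored.
function selectTriage(alert) {
  if (alert.status !== "Down") return null;
  return TRIAGE.get(alert.name) ?? null;
}

// Create (but do not start) the orchestrator server.
function createOrchestrator(sharedSecret) {
  return http.createServer((req, res) => {
    const url = new URL(req.url, "http://localhost");
    if (req.method !== "POST" || url.searchParams.get("secret") !== sharedSecret) {
      res.writeHead(403).end();
      return;
    }
    let body = "";
    req.on("data", (chunk) => { body += chunk; });
    req.on("end", () => {
      const triage = selectTriage(parseAlert(body));
      if (!triage) {
        res.writeHead(204).end(); // nothing to do for this alert
        return;
      }
      const [command, args] = triage;
      // execFile avoids a shell, so webhook input never reaches a command line.
      execFile(command, args, (err) => {
        res.writeHead(err ? 500 : 200).end();
      });
    });
  });
}
```

Starting it is one line, for example `createOrchestrator(process.env.ORCH_SECRET).listen(9000)`, with the port and variable name chosen to suit your host. The commands come from a fixed map and are never built from the request body, which keeps a forged webhook from running arbitrary commands.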

Deploying an AI agent is a massive step forward in workflow automation. But letting a machine execute shell commands, manage databases, and communicate on your behalf without guardrails is a liability.

By layering StatusCake’s global monitoring network over your OpenClaw instance and wiring those alerts into a self-healing webhook architecture, you transform a fragile AI experiment into a resilient, production-ready system.

Set up your checks, configure your orchestrator, and finally let your agent do what it was built to do: operate reliably in the shadows.
